Detecting and tracking the dynamic objects ahead of an autonomous vehicle in 3D is essential. The problem can be divided into three major tasks. First, a detector identifies the surrounding objects. Second, a tracking module follows the detected objects while keeping the computational cost as low as possible. Finally, a depth estimation method reconstructs the third dimension so that lateral and longitudinal distances are obtained simultaneously.
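As a minimal illustration of the third task, the sketch below converts a pixel column and an estimated depth into lateral and longitudinal distances under a standard pinhole camera model. The function name and the intrinsics (`fx`, `cx`) are hypothetical values chosen for the example, not parameters of the actual system.

```python
def pixel_to_lateral_longitudinal(u, depth_m, fx, cx):
    """Back-project an image column to metric distances (pinhole model).

    u        : horizontal pixel coordinate of the object (assumed input)
    depth_m  : estimated distance along the optical axis, in metres
    fx, cx   : focal length and principal point in pixels (assumed intrinsics)
    """
    lateral = (u - cx) * depth_m / fx   # offset to the side of the optical axis
    longitudinal = depth_m              # distance ahead of the camera
    return lateral, longitudinal


# Example with made-up intrinsics: an object 200 px right of the image centre.
lateral, longitudinal = pixel_to_lateral_longitudinal(
    u=840, depth_m=20.0, fx=1000.0, cx=640.0
)
```

The example assumes an undistorted image and a camera whose optical axis is aligned with the driving direction; a real setup would also need the camera-to-vehicle extrinsics.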

For state-of-the-art object detectors, execution speed plays a key role in practical applications. In particular, optimization tools such as the TensorRT library are important for reaching satisfactory performance. A critical aspect of designing a detector, or of adopting an existing state-of-the-art one, is therefore how to implement it on an embedded system while balancing speed and accuracy, which cannot be achieved without significant attention to optimization.

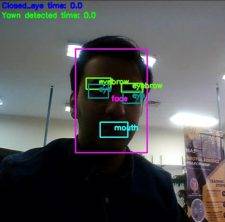

The tracking task, on the other hand, has its own challenges. Execution speed is even more crucial for the tracker than for the detector; however, because tracker architectures are much lighter, the greater challenge is handling the tracking problem across a wide range of environmental conditions. In addition, objects vary in size, which makes it hard to track them with consistent performance. Consequently, it is proposed not only to use a convolutional neural network-based tracker but also to adopt a flexible structure together with a specific training process to obtain proper results.

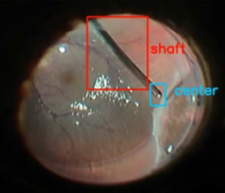

Depth estimation, one of the most challenging problems in computer vision, has become a subject of significant research interest. Our group pursues two main solutions: a hybrid approach that combines nonlinear observers with deep learning, and end-to-end deep convolutional neural networks. In the former, an image-based observer is employed alongside deep learning methods. Although deep learning methods have proved useful for obtaining a depth map from a single image, their computational cost and the dynamic environment mean that the proposed hybrid approach may perform better in terms of speed and practical applicability.

Since the camera and the objects move relative to each other, the problem can be formulated as a structure from motion (SFM) problem. Several approaches based on unknown input observers have been presented in the literature to address it. However, none of them is fully robust against model uncertainties. Moreover, their practical implementation is limited by the lack of a suitable method for extracting information from images. As a result, it is proposed not only to employ a newly developed switched state-dependent Riccati equation (SDRE) filter but also to use a deep CNN-based tracker to extract observations from images. In addition, occlusions, blurred images, and similar effects may cause intermittent measurements, which yield unstable error dynamics for an observer. To handle such cases, a robust object tracking scheme based on recurrent neural networks is suggested.
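To make the intermittent-measurement issue concrete, the following sketch shows one simple way an estimator can coast through dropouts: when a measurement is missing, it propagates its motion model instead of applying a correction. This is not the switched SDRE filter or the recurrent-network tracker described above; a fixed-gain alpha-beta filter is swapped in purely for illustration, and the function name, gains, and time step are assumptions.

```python
def track_with_dropouts(measurements, dt=0.1, alpha=0.85, beta=0.005):
    """Alpha-beta filter that predicts through missing measurements.

    measurements : list of floats, with None marking a dropped frame
                   (e.g. an occlusion or a blurred image)
    dt           : time between frames in seconds (assumed constant)
    alpha, beta  : fixed correction gains (illustrative values)
    """
    x, v = None, 0.0          # position and velocity estimates
    estimates = []
    for z in measurements:
        if x is None:
            # Wait for the first valid measurement to initialise the state.
            if z is not None:
                x = z
            estimates.append(x)
            continue

        x_pred = x + v * dt   # constant-velocity prediction
        if z is None:
            # No measurement: keep the prediction, skip the correction.
            x = x_pred
        else:
            residual = z - x_pred
            x = x_pred + alpha * residual
            v = v + (beta / dt) * residual
        estimates.append(x)
    return estimates


# Third frame is dropped; the estimate keeps moving at the learned velocity.
estimates = track_with_dropouts([0.0, 1.0, None], dt=1.0, alpha=0.5, beta=0.5)
```

Pure prediction drifts if the dropout lasts long or the target manoeuvres, which is one reason a learned, more robust tracking scheme is attractive for these cases.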

In the evaluation process, each proposed method is first implemented and tested in a simulator such as CARLA. Real-world experiments are then conducted, in which a radar sensor is used to evaluate the depth estimates. In these experiments, various embedded systems, such as NVIDIA Jetson boards, are used for different applications depending on the computational load. Furthermore, a common practice in the implementations is to use a multi-threaded framework to further improve system performance.
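A multi-threaded framework of this kind can be sketched as a two-stage producer/consumer pipeline, where detection and tracking run in separate threads connected by a bounded queue. The function `run_pipeline` and the `detect` and `track` callables are hypothetical placeholders, not the actual implementation.

```python
import queue
import threading

_STOP = object()  # sentinel that tells the consumer no more frames will arrive


def run_pipeline(frames, detect, track, maxsize=4):
    """Run detection and tracking in two threads linked by a bounded queue.

    frames  : iterable of input frames
    detect  : callable(frame) -> detections        (placeholder)
    track   : callable(frame, detections) -> result (placeholder)
    maxsize : queue capacity; bounds memory if the tracker falls behind
    """
    detections = queue.Queue(maxsize=maxsize)
    results = []

    def detector_worker():
        for frame in frames:
            detections.put((frame, detect(frame)))  # blocks when queue is full
        detections.put(_STOP)

    def tracker_worker():
        while True:
            item = detections.get()
            if item is _STOP:
                break
            frame, boxes = item
            results.append(track(frame, boxes))

    threads = [
        threading.Thread(target=detector_worker),
        threading.Thread(target=tracker_worker),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


# Toy stand-ins for the detector and tracker, just to exercise the pipeline.
outputs = run_pipeline(range(5), lambda f: f * 2, lambda f, boxes: f + boxes)
```

With a single producer and a single consumer, the FIFO queue preserves frame order. In CPython, threads mainly help when the stages release the global interpreter lock, for example during I/O or native inference calls.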

All of the tasks mentioned above are directly related to my Ph.D. thesis. However, my interests go beyond the autonomous vehicle application. I am passionate about applying deep learning, especially deep CNNs, to other domains such as medical image processing. For instance, GANs can be used for unsupervised learning and for generating new artificial datasets, although a synthetic dataset does not properly replace real data. Furthermore, these networks can be used to improve the training of each of the aforementioned approaches in several respects.